Mar 3, 2026

AI Agent Projects in 2026: 5 Things to Do Now - Modern Business Workers

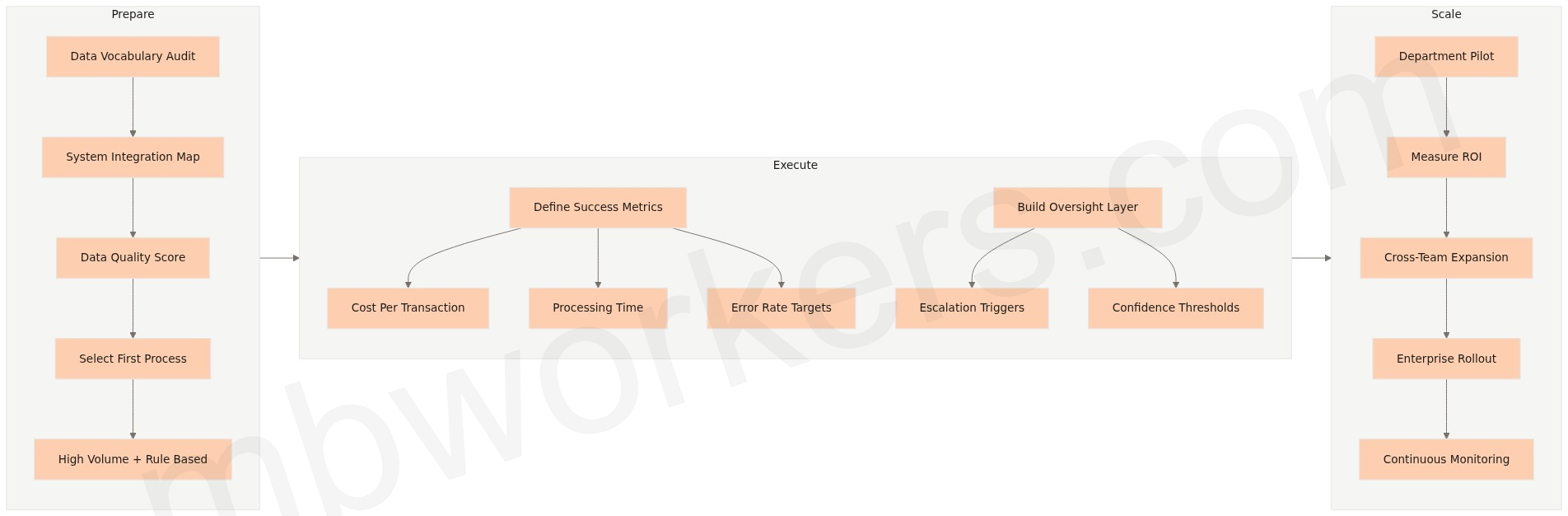

Between 70 and 80 percent of AI agent projects never reach enterprise scale. According to Accenture research and published industry analysis, the gap between AI agent enthusiasm and actual results is not a technology problem. It is a preparation problem. Five concrete actions taken now, from auditing your data vocabulary to defining oversight structures, can determine whether your AI agent investment delivers measurable returns in 2026 or joins the majority that stall.

Table of Contents

- Why Do Most AI Agent Projects Fail Before They Scale?

- Step 1: Map the Data Your AI Agents Will Need

- Step 2: Identify the Right First Process for Agent Deployment

- Step 3: Define Success Metrics Before You Build

- Step 4: Build the Human Oversight Layer Intentionally

- Step 5: Build a Scaling Path Before You Need One

- How Should SMEs Approach AI Agent Deployment in 2026?

Why Do Most AI Agent Projects Fail Before They Scale?

The failure rate for agentic AI implementations is not a fringe statistic. According to analysis published by Beam AI, roughly 90% of implementations encounter serious obstacles within the first six months. The root causes appear consistently across industries and company sizes.

Key Takeaways:

- According to Gartner, 40% of enterprise applications will embed task-specific AI agents by 2026, up from less than 5% in 2025, making the window for early-mover advantage narrow but real.

- The primary reason AI agent projects fail is not flawed technology: it is incomplete data foundations, vague success metrics, and pilots that never scale beyond a single department.

- Intelligent document processing is the critical first investment: without structured, reliable data flowing into your agents, accuracy and confidence collapse at the task level.

- SMEs that start with a single high-volume, high-friction process and expand methodically consistently outperform organizations that attempt broad, simultaneous deployment.

The most common failure pattern is treating AI agents like traditional automation. Conventional RPA follows rigid, rule-based scripts. AI agents are probabilistic: they interpret context, handle variation, and make judgment calls. Organizations that apply the same governance model to both end up with agents that either fail silently or require constant human intervention to correct outputs.

The second failure pattern is the pilot trap. A proof-of-concept runs in one department, produces encouraging results, and then sits there. According to Accenture research, only 16% of organizations successfully shift from AI experimentation to robust scaling. The problem is rarely budget. It is the absence of a clear path from departmental success to cross-functional deployment, including data access, integration, and change management.

The third pattern is vague success criteria. “Improve productivity” is not a metric. “Reduce invoice processing time from eight days to two, at 99.5% accuracy” is. Without specific targets, there is no way to measure progress, justify continued investment, or identify where agents are underperforming.

Step 1: Map the Data Your AI Agents Will Need

AI agents are only as reliable as the data feeding them. Every business process generates documents: invoices, contracts, purchase orders, customer emails, service requests. Most of this content exists in unstructured or semi-structured formats that agents cannot directly interpret. When the underlying data is incomplete or inconsistent, agent accuracy degrades and human override rates climb.

A useful starting exercise is what practitioners call a data vocabulary audit: a systematic cataloguing of every field name, label, and variable your agents will need to read, interpret, and act on. This is not an abstract exercise. It surfaces naming inconsistencies (“invoice_date” in one system, “InvDt” in another), missing fields, and format mismatches before they cause agent failures in production.
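A data vocabulary audit can start as a very small script. The sketch below is illustrative only: the system names, the field lists, and the `ALIASES` map are hypothetical, and in practice the alias map would be built by hand from your own systems. It reports canonical fields that appear under more than one spelling, fields with no canonical name yet, and canonical fields a system does not provide.

```python
from collections import defaultdict

# Hypothetical alias map: each spelling seen in a source system -> one canonical name.
ALIASES = {
    "invoice_date": "invoice_date",
    "InvDt": "invoice_date",
    "supplier_id": "supplier_id",
    "VendorNo": "supplier_id",
    "total_amount": "total_amount",
}

# Hypothetical field inventories exported from two systems.
SYSTEM_FIELDS = {
    "erp": ["invoice_date", "supplier_id", "total_amount"],
    "accounts_payable": ["InvDt", "VendorNo", "PayTerms"],
}


def audit_vocabulary(system_fields, aliases):
    """Return naming variants, unmapped fields, and missing fields per system."""
    variants = defaultdict(set)   # canonical name -> spellings seen
    unmapped = defaultdict(list)  # system -> fields with no canonical name
    present = defaultdict(set)    # system -> canonical names it provides

    for system, fields in system_fields.items():
        for field in fields:
            canonical = aliases.get(field)
            if canonical is None:
                unmapped[system].append(field)
                continue
            variants[canonical].add(field)
            present[system].add(canonical)

    all_canonical = set(aliases.values())
    missing = {s: sorted(all_canonical - present[s]) for s in system_fields}
    inconsistent = {c: sorted(v) for c, v in variants.items() if len(v) > 1}
    return {
        "inconsistent": inconsistent,
        "unmapped": dict(unmapped),
        "missing": missing,
    }


report = audit_vocabulary(SYSTEM_FIELDS, ALIASES)
print(report)
```

Anything listed under `unmapped` or `missing` is a question for the process owner before an agent is pointed at that system.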

Intelligent document processing (IDP) solves the extraction problem by combining optical character recognition, natural language processing, and machine learning to extract and structure data from documents automatically. According to Uhura Solutions, AI-driven document processing can reduce manual extraction effort by up to 80% while achieving accuracy rates near 99%. For SMEs, that translates directly to faster cycle times and fewer errors entering downstream systems.

The practical approach is to start narrow. Choose one high-volume document type, such as supplier invoices or customer intake forms, validate extraction quality against a sample of real documents, and expand from there. Spiralscout’s analysis of AI document ingestion recommends normalizing extracted data to a consistent format (JSON works well) and routing low-confidence extractions to a human reviewer rather than letting errors propagate automatically. This human-in-the-loop checkpoint is not a workaround: it is what keeps the data foundation reliable enough for agents to act on confidently.
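The normalize-then-route pattern can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the input shape (a value and a confidence score per field), the `REVIEW_THRESHOLD` value, and the sample documents are all assumptions made for the example.

```python
import json

REVIEW_THRESHOLD = 0.90  # assumed cut-off; tune it against a sample of real documents


def normalize(extraction):
    """Flatten one raw extraction into a consistent, JSON-ready record."""
    fields = extraction["fields"]
    return {
        "document_id": extraction["document_id"],
        "fields": {name: f["value"] for name, f in fields.items()},
        # The weakest field decides the route for the whole document.
        "min_confidence": min(f["confidence"] for f in fields.values()),
    }


def route(extraction, threshold=REVIEW_THRESHOLD):
    """Send a record to the agent queue or to a human reviewer."""
    record = normalize(extraction)
    if record["min_confidence"] >= threshold:
        record["route"] = "agent"
    else:
        record["route"] = "human_review"
    return record


# Hypothetical output of an extraction step for two supplier invoices.
samples = [
    {
        "document_id": "inv-001",
        "fields": {
            "invoice_date": {"value": "2026-02-14", "confidence": 0.99},
            "total_amount": {"value": "1250.00", "confidence": 0.97},
        },
    },
    {
        "document_id": "inv-002",
        "fields": {
            "invoice_date": {"value": "2026-02-1?", "confidence": 0.62},
            "total_amount": {"value": "980.00", "confidence": 0.95},
        },
    },
]

routed = [route(s) for s in samples]
print(json.dumps(routed, indent=2))
```

Routing on the lowest field confidence is a deliberately conservative choice: one doubtful field is enough to hold the whole document back for review.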

Step 2: Identify the Right First Process for Agent Deployment

Not every process is a good starting point for AI agents. The best candidates share three characteristics: high transaction volume, clear decision rules, and measurable outcomes. Processes that require frequent exceptions, regulatory sign-off on every step, or deep institutional judgment are better suited for later phases.

For most SMEs, the strongest first-use cases fall into a predictable set: first-line customer support, invoice processing and matching, employee onboarding document collection, and sales inquiry routing. According to Salesmate’s 2026 AI agent adoption statistics, 30 to 35% of mid-to-large enterprises already use AI agents for first-line customer support, with those agents handling 50 to 65% of inquiries without human intervention and reducing resolution time by 25 to 40%.

The selection criteria matter more than the specific process. Pick something that currently consumes significant staff hours, has outputs that are easy to verify, and connects to a system your team already uses. That last point is underappreciated: agents that integrate with existing CRM, ERP, or helpdesk platforms show faster time-to-value than those requiring new infrastructure. For SMEs evaluating custom AI agents built on platforms like Claude, the integration question is often the deciding factor in deployment timelines.
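One way to make these selection criteria explicit is a simple checklist score. The sketch below is an assumption-laden illustration: the candidate processes, their volumes, and the `volume_floor` of 500 transactions per month are invented for the example and should be replaced with your own figures.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    monthly_volume: int         # transactions per month
    clear_rules: bool           # decisions follow documented rules
    measurable_outcome: bool    # outputs are easy to verify
    uses_existing_system: bool  # connects to a CRM, ERP, or helpdesk already in use


def score(candidate, volume_floor=500):
    """Count how many of the four selection criteria a process meets (0 to 4)."""
    return sum([
        candidate.monthly_volume >= volume_floor,
        candidate.clear_rules,
        candidate.measurable_outcome,
        candidate.uses_existing_system,
    ])


# Hypothetical candidates with illustrative numbers.
candidates = [
    Candidate("invoice matching", 4000, True, True, True),
    Candidate("contract negotiation", 30, False, False, True),
    Candidate("sales inquiry routing", 1200, True, True, False),
]

# Highest score first; volume breaks ties.
ranked = sorted(candidates, key=lambda c: (score(c), c.monthly_volume), reverse=True)
for c in ranked:
    print(f"{c.name}: {score(c)}/4")
```

An unweighted count keeps the discussion focused on whether each criterion is met rather than on tuning weights; a weighted score is a reasonable next step once there are more than a handful of candidates.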

Step 3: Define Success Metrics Before You Build

The organizations that scale AI agents successfully share one habit: they define what success looks like before the first line of configuration is written. This is not project management formality. It is the mechanism that separates pilots that justify continued investment from pilots that get quietly shelved.

Effective metrics for AI agent deployments are specific, time-bound, and tied to business outcomes rather than technical performance. Agent response accuracy is a useful internal measure, but the metric that gets executive attention is “reduced cost per transaction” or “headcount redeployed to higher-value work.” Deloitte’s analysis of AI agent deployments found that organizations framing AI investment in outcome terms secure faster budget approval and clearer accountability structures.

A practical starting framework for SMEs:

| Metric Type | Example | Why It Matters |
|---|---|---|
| Volume | Invoices processed per day | Establishes baseline and measures throughput gain |
| Accuracy | Error rate before vs. after | Quantifies quality improvement and reduces rework |
| Speed | Processing time per transaction | Direct proxy for cost reduction |
| Human override rate | % of agent decisions reviewed | Tracks agent confidence and calibration over time |
| Staff reallocation | Hours freed per week | Translates to concrete ROI for leadership |

Set targets for each metric at the outset. Review them at 30 and 90 days. If the agent is not meeting targets, the metrics tell you where to look: accuracy problems usually point back to data quality, while volume problems often indicate integration or workflow bottlenecks.
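A 30-day or 90-day review can be reduced to a comparison of actuals against the targets set at the outset. In the sketch below, the processing-time and error-rate targets echo the invoice example earlier in this article (two days, 99.5% accuracy); the volume and override targets, the metric names, and the day-30 figures are hypothetical.

```python
# Targets set at the outset. "better" states which direction counts as success.
TARGETS = {
    "invoices_per_day": {"target": 400, "better": "higher"},    # hypothetical
    "error_rate_pct": {"target": 0.5, "better": "lower"},       # 99.5% accuracy
    "processing_days": {"target": 2, "better": "lower"},
    "human_override_pct": {"target": 10, "better": "lower"},    # hypothetical
}

# Where to look first when a metric misses, following the heuristic in the text.
FIRST_CHECK = {
    "error_rate_pct": "data quality",
    "invoices_per_day": "integration or workflow bottlenecks",
}


def review(actuals, targets=TARGETS):
    """Return every metric that missed its target, with a first place to look."""
    misses = []
    for name, spec in targets.items():
        value = actuals[name]
        if spec["better"] == "higher":
            met = value >= spec["target"]
        else:
            met = value <= spec["target"]
        if not met:
            misses.append({
                "metric": name,
                "actual": value,
                "target": spec["target"],
                "check": FIRST_CHECK.get(name, "unspecified"),
            })
    return misses


# Hypothetical figures from a 30-day review.
day_30 = {
    "invoices_per_day": 310,
    "error_rate_pct": 0.4,
    "processing_days": 2,
    "human_override_pct": 18,
}

misses = review(day_30)
for miss in misses:
    print(miss)
```

Running the same function at day 90 with the same `TARGETS` keeps the two reviews directly comparable.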

Step 4: Build the Human Oversight Layer Intentionally

AI agents operating without human oversight structures create two distinct risks: silent errors that accumulate undetected, and security exposure from agents with excessive system permissions. Both risks are manageable, but only if oversight is designed in from the beginning rather than retrofitted after problems emerge.

According to Gravitee’s 2026 State of AI Agent Security report, 81% of organizations deploying AI agents have moved beyond the planning phase on security, but only 14.4% have full security approval in place. That gap between deployment speed and governance readiness is where most incidents originate.

For SMEs, the practical oversight structure has three components. First, define which decisions the agent can make autonomously and which require human confirmation. High-stakes or irreversible actions (payment releases, contract execution, customer account changes) should always route to a human checkpoint. Second, establish exception queues: when an agent encounters a case outside its confidence threshold, it should flag it for review rather than guess. Third, audit agent activity on a regular cadence. Reviewing a sample of agent decisions weekly during the first 90 days catches calibration problems before they scale.
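The three components above map onto a small routing policy plus a sampling step. The following is a sketch under stated assumptions: the action names, the `CONFIDENCE_THRESHOLD` of 0.85, and the 5% audit rate are hypothetical values chosen for illustration, not recommendations from the sources cited here.

```python
import random

# High-stakes or irreversible actions always go to a human, whatever the confidence.
HUMAN_CONFIRM = {"payment_release", "contract_execution", "customer_account_change"}
CONFIDENCE_THRESHOLD = 0.85  # assumed value; calibrate during the first 90 days


def decide(action, confidence, threshold=CONFIDENCE_THRESHOLD):
    """Route one proposed agent action to the right oversight path."""
    if action in HUMAN_CONFIRM:
        return "human_checkpoint"
    if confidence < threshold:
        return "exception_queue"  # flag for review rather than guess
    return "autonomous"


def weekly_audit_sample(decision_log, rate=0.05, seed=None):
    """Pick a random sample of logged decisions for manual review."""
    if not decision_log:
        return []
    rng = random.Random(seed)
    k = max(1, round(len(decision_log) * rate))
    return rng.sample(decision_log, k)


print(decide("payment_release", 0.99))
print(decide("ticket_tagging", 0.60))
print(decide("ticket_tagging", 0.95))
print(len(weekly_audit_sample(list(range(40)), seed=1)))
```

Checking the high-stakes list before the confidence score is the important ordering: a very confident agent still cannot release a payment on its own.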

Pearson’s deployment of AI tools across 17,000 employees illustrates the value of structured rollout. Rather than a blanket deployment, Pearson used job-specific training cohorts, grouping marketing teams together and engineering teams together. The result was 5,000 employees completing AI accreditation in under a year, with 3,000 signing up in the first month. The structure reduced resistance and built the kind of human-AI collaboration habits that make oversight sustainable.

Step 5: Build a Scaling Path Before You Need One

The pilot-to-scale gap is where most AI agent investments stall. A single department runs a successful proof-of-concept. The results are positive. Then the question becomes: how do we extend this to three departments, or five? Without a pre-defined scaling path, that question triggers months of re-architecture, re-negotiation, and re-training.

The organizations that avoid this pattern treat the pilot as a template, not a one-off. During the initial deployment, they document every integration point, every exception type, every human override decision. That documentation becomes the playbook for the second and third deployments. Workday’s guidance for small businesses adopting AI emphasizes this documentation discipline as the primary driver of faster subsequent rollouts.

Part of that documentation work involves a systematic review of every variable name, field definition, and data label across the systems the agent will touch as it expands, the same kind of data vocabulary audit described in Step 1, applied now to your broader architecture. Inconsistencies that are harmless in a single-department pilot become compounding errors when the same agent operates across finance, operations, and customer service simultaneously.

For SMEs specifically, the cost structure of scaling matters. Technova Partners’ analysis of AI agent implementation costs shows that medium-complexity projects with two to three integrations typically run between £20,000 and £36,000 for firms in the 10 to 50 employee range, well below the £120,000-plus cost of enterprise-scale deployments. Starting with a contained, well-documented pilot keeps initial investment proportionate while building the institutional knowledge needed to expand efficiently.

The scaling plan should also address data governance. As agents expand across departments, they access more systems and more sensitive data. Defining data access boundaries at the pilot stage, rather than retrofitting them at scale, prevents the security gaps that the Gravitee report identifies as the primary risk in rapid AI agent adoption.

How Should SMEs Approach AI Agent Deployment in 2026?

SMEs starting AI agent deployment in 2026 have a narrower window than early enterprise adopters, but a clearer playbook. The practical priority is sequencing: data foundation first, single high-volume process second, defined metrics before build, oversight by design, and a documented scaling path from day one. Organizations that follow this sequence consistently reach production faster and with fewer costly restarts.

These five steps share a common logic: AI agents succeed when the environment they operate in is designed for them, not retrofitted around them. The 70 to 80% of projects that fail are not failing because the technology is inadequate. They are failing because the data is messy, the success criteria are vague, the oversight is absent, and the path from pilot to production was never drawn.

The organizations seeing real results in 2026, including early adopters across financial services, logistics, and professional services, are not necessarily the ones with the largest AI budgets. They are the ones that treated the groundwork as the investment, not the agent itself.

That distinction, between organizations that prepared the environment and those that simply deployed the technology, is precisely what separates the 16% that scale from the 84% that don’t.

Frequently Asked Questions

Why do most AI agent projects fail to scale?

According to Beam AI analysis, roughly 90% of agentic AI implementations hit serious obstacles within six months. The root causes are consistently incomplete data foundations, vague success metrics, and pilots that never expand beyond a single department – not flawed technology.

How is an AI agent different from traditional RPA automation?

Unlike RPA, which follows rigid rule-based scripts, AI agents are probabilistic. They interpret context, handle variation, and make judgment calls. Applying the same governance model to both typically results in agents that fail silently or require constant human correction.

What is the best first step for SMEs adopting AI agents in 2026?

Start with a single high-volume, high-friction process rather than broad simultaneous deployment. SMEs that expand methodically from one clear use case consistently outperform organizations that try to scale across multiple departments at once.

How fast is enterprise AI agent adoption growing?

According to Gartner, 40% of enterprise applications will embed task-specific AI agents by 2026, up from less than 5% in 2025. That rapid shift makes the early-mover window narrow but meaningful for SMEs that prepare now.